Google Earth KML Generator for Radio Networks

One thing that really bothers me is having the same data in multiple places and having to update it by hand more than once. So I was bothered when a colleague recently spent a few days creating a Google Earth file for our communications network (>150 nodes), even though we already had a spreadsheet with the location of every node. So, in a couple of hours, I came up with this program, which converts a spreadsheet or CSV file into a KML file that can be imported into Google Earth.

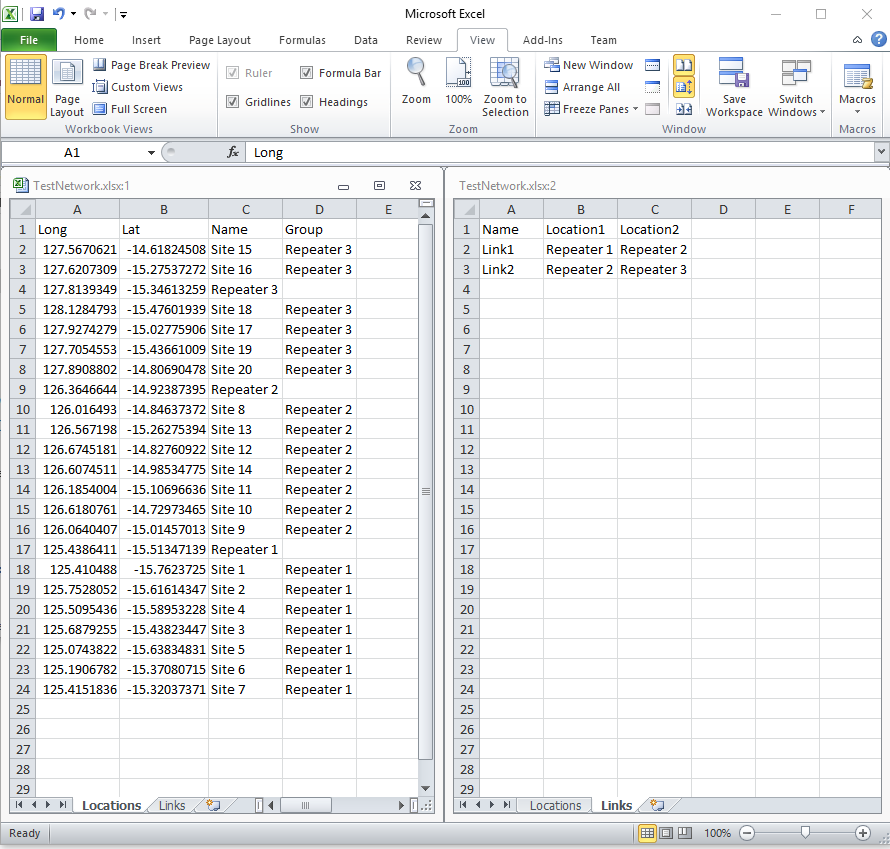

Take the following data (this is not a real network; I just picked a bunch of random points):

Drop the spreadsheet onto the CsvToKml program, and it spits out a KML file, which looks like this in Google Earth:

Now, every time we update the spreadsheet, all we need to do is feed it to CsvToKml and we get a new Google Earth file.
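For anyone curious what the conversion involves, here is a minimal sketch of the idea in Python. It is not the CsvToKml source, just an illustration under some assumptions: the function name `csv_to_kml` is made up, and the CSV is assumed to have a header row with `name`, `latitude`, and `longitude` columns, which may not match the real spreadsheet layout. Each row becomes one KML `Placemark`.

```python
import csv
import io
from xml.sax.saxutils import escape


def csv_to_kml(csv_text: str) -> str:
    """Turn CSV text into a KML document with one Placemark per row.

    Assumed (hypothetical) columns: name, latitude, longitude.
    """
    placemarks = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        name = escape(row["name"].strip())  # escape &, <, > for XML
        lat = float(row["latitude"])
        lon = float(row["longitude"])
        # KML expects longitude first, then latitude, then altitude.
        placemarks.append(
            "    <Placemark>\n"
            f"      <name>{name}</name>\n"
            f"      <Point><coordinates>{lon},{lat},0</coordinates></Point>\n"
            "    </Placemark>"
        )
    return (
        '<?xml version="1.0" encoding="UTF-8"?>\n'
        '<kml xmlns="http://www.opengis.net/kml/2.2">\n'
        "  <Document>\n"
        + "\n".join(placemarks)
        + "\n  </Document>\n"
        "</kml>\n"
    )


if __name__ == "__main__":
    # Made-up sample points, not a real network.
    sample = (
        "name,latitude,longitude\n"
        "Node A,45.25,-122.5\n"
        "Node B,46.0,-121.75\n"
    )
    print(csv_to_kml(sample))
```

The one detail that is easy to get wrong is the coordinate order: KML wants longitude before latitude, which is the opposite of how most spreadsheets list them.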